The question getting asked in every architecture review

Every few weeks, a new model launch pushes context windows further. Million-token windows are now common, and ten-million-token windows exist in production models. And every time the number grows, the same question surfaces in engineering reviews across the industry: do we still need RAG?

The temptation is understandable. RAG pipelines are complex, with embeddings, vector stores, rerankers, chunking strategies, and metadata filters to build and maintain. If you can just dump the entire corpus into a long-context call, why not skip all that machinery? Pay for the tokens, get the answer, move on.

The reality, as always, is more nuanced. Long context doesn't eliminate the need for RAG. It changes when RAG is the right tool and when it isn't — and the teams that understand that distinction are building better systems than the teams stuck arguing about which approach "wins."

Where long context actually shines

Long context is the clear winner in a specific set of situations:

Single-session reasoning over bounded content

When a user uploads a document, a codebase, or a dataset and wants to ask several questions about it, long context is usually better than RAG, provided the content fits in the window. The model sees everything, the reasoning spans the full content naturally, and there's no retrieval step to get wrong. The cost per session is higher, but so is the quality ceiling.
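A minimal sketch of that routing decision, assuming a rough four-characters-per-token heuristic in place of a real tokenizer and a hypothetical 1M-token window. The function names are illustrative, not from any library:

```python
# Rough heuristic: about 4 characters per token for English text.
# This is an assumption; use your model's own tokenizer when it matters.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Crude token estimate from character count (ceiling division)."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(documents: list[str], question: str,
                    context_window: int, reserve_for_output: int = 4_000) -> bool:
    """True if every document plus the question fits, leaving room for the answer."""
    needed = sum(estimate_tokens(d) for d in documents) + estimate_tokens(question)
    return needed + reserve_for_output <= context_window


def choose_strategy(documents: list[str], question: str,
                    context_window: int = 1_000_000) -> str:
    # Bounded content that fits goes in whole; anything larger needs retrieval.
    if fits_in_context(documents, question, context_window):
        return "long_context"
    return "rag"


print(choose_strategy(["x" * 400_000], "What changed in v2?"))  # long_context
```

With a real tokenizer swapped in, the same check tells you when a session has outgrown the window and needs retrieval.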

Multi-document synthesis

Tasks that require comparing or combining information across documents are notoriously hard for RAG. Retrieval pulls chunks in isolation, losing the cross-document relationships. Long context keeps everything in scope, letting the model reason across the documents as a whole.
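One way to keep documents distinguishable inside a single long-context prompt is to wrap each in a labeled delimiter, so the model can compare them and cite them by name. The tag format below is an assumption, not a requirement of any particular model:

```python
def build_synthesis_prompt(docs: dict[str, str], task: str) -> str:
    """Concatenate labeled documents, then append the task instruction."""
    parts = [f'<document name="{name}">\n{text}\n</document>'
             for name, text in docs.items()]  # dicts keep insertion order
    parts.append(f"Task: {task}\nCite documents by name when comparing them.")
    return "\n\n".join(parts)


prompt = build_synthesis_prompt(
    {"contract_2023.txt": "...", "contract_2024.txt": "..."},
    "List every clause that changed between the two contracts.",
)
```

Because every document stays in the prompt, the model can relate a clause in one file to a clause in another without depending on a retriever having surfaced both.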

Where retrieval quality is the bottleneck

If your RAG pipeline has recall problems, meaning the right chunks aren't consistently in the top-k, long context sidesteps the problem by making retrieval unnecessary, provided the content fits in the window. Switching is sometimes faster than fixing the retrieval layer.
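Before switching, it can be worth confirming that retrieval really is the bottleneck. Here is a small sketch of recall@k over a labeled evaluation set. It assumes that for each test query you have the ranked chunk IDs your pipeline returned and the set of chunk IDs a human marked as relevant; both are hypothetical inputs here:

```python
def recall_at_k(retrieved: list[list[str]], relevant: list[set[str]], k: int) -> float:
    """Mean fraction of each query's relevant chunks that appear in its top-k results."""
    scores = []
    for ranked, gold in zip(retrieved, relevant):
        if not gold:
            continue  # skip queries with no labeled relevant chunks
        hits = len(set(ranked[:k]) & gold)
        scores.append(hits / len(gold))
    return sum(scores) / len(scores) if scores else 0.0


retrieved = [["a", "b", "c"], ["d", "e", "f"]]
relevant = [{"a"}, {"x", "e"}]
print(recall_at_k(retrieved, relevant, k=2))  # 0.75
```

Low recall@k at a reasonable k points at the retrieval layer. High recall combined with poor answers points somewhere else.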

Where RAG is still the right answer

Long context is powerful, but it isn't free. There are entire classes of problems where RAG dominates, and will keep dominating:

Very large corpora

A million tokens is a lot. It's also nowhere near enough to hold an enterprise knowledge base. When your total content is larger than any context window can fit — and for most serious applications, it is — you need retrieval. No amount of context growth changes that.

High-volume, cost-sensitive workloads

Long context costs scale with input tokens. A customer service bot handling a million queries a day can't afford to put 500K tokens of context into every request, even at aggressively discounted rates. RAG lets you send only the few hundred tokens that matter, per request. At volume, the cost difference is enormous.
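Here is the arithmetic behind that claim, as a sketch. The $1 per million input tokens price is a hypothetical placeholder, and 500 tokens stands in for "a few hundred" retrieved tokens per request. Output tokens and any caching discounts are ignored:

```python
def daily_input_cost(queries_per_day: int, tokens_per_request: int,
                     usd_per_million_tokens: float) -> float:
    """Input-token spend per day for a fixed per-request context size."""
    return queries_per_day * tokens_per_request * usd_per_million_tokens / 1_000_000


PRICE = 1.00  # hypothetical $ per million input tokens

long_ctx = daily_input_cost(1_000_000, 500_000, PRICE)
rag = daily_input_cost(1_000_000, 500, PRICE)

print(f"long context: ${long_ctx:,.0f}/day")  # long context: $500,000/day
print(f"RAG: ${rag:,.0f}/day")                # RAG: $500/day
print(f"ratio: {long_ctx / rag:,.0f}x")       # ratio: 1,000x
```

Whatever the real price turns out to be, the ratio depends only on tokens per request. Sending 500K tokens instead of a few hundred is roughly three orders of magnitude more input.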

Freshness requirements

RAG pipelines can refresh their index incrementally. Long-context systems that rely on pasting content into the prompt need to reassemble that content on every request, from whatever source holds the current version. For frequently changing data, the retrieval layer is what makes keeping answers current tractable.
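A minimal sketch of incremental refresh. It assumes each document has a stable ID and that `embed` is a placeholder for your embedding call (hypothetical, not a specific library). Only new or changed documents are re-embedded, and unchanged ones are detected by content hash:

```python
import hashlib


def content_hash(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def refresh_index(index: dict, source_docs: dict[str, str], embed) -> dict:
    """Sync index (doc_id -> {"hash", "vector"}) with the current source documents.

    `embed` is a hypothetical callable: text -> vector.
    """
    stats = {"added": 0, "updated": 0, "deleted": 0, "unchanged": 0}
    for doc_id, text in source_docs.items():
        h = content_hash(text)
        entry = index.get(doc_id)
        if entry is None:
            index[doc_id] = {"hash": h, "vector": embed(text)}
            stats["added"] += 1
        elif entry["hash"] != h:
            index[doc_id] = {"hash": h, "vector": embed(text)}  # content changed
            stats["updated"] += 1
        else:
            stats["unchanged"] += 1  # no embedding call needed
    for doc_id in list(index):
        if doc_id not in source_docs:
            del index[doc_id]  # removed at the source
            stats["deleted"] += 1
    return stats
```

Embedding cost then scales with how much content changed rather than with corpus size. A pure long-context setup has no equivalent shortcut, because it has to rebuild the prompt on every request.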

Precision-critical fact lookup

When the answer to a question is in a specific sentence of a specific document, you want the model to see that sentence prominently, not buried in a million-token haystack. For this kind of lookup, a long-context call can be measurably worse than a well-tuned RAG pipeline, despite headlines claiming otherwise. Models still show "lost in the middle" effects at scale: information placed in the middle of a long input is used less reliably than information near its start or end.
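The "lost in the middle" effect is straightforward to measure on your own data. Place the fact you care about at different depths in a long filler context and check whether the model still finds it. The sketch below covers only the prompt-building half and leaves out the model call and scoring. `filler` is whatever unrelated passages you pad with, which is an assumption:

```python
def build_haystack(needle: str, filler: list[str], depth: float) -> str:
    """Insert `needle` at a relative depth (0.0 = start, 1.0 = end) among filler passages."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    pos = round(depth * len(filler))
    return "\n\n".join(filler[:pos] + [needle] + filler[pos:])


def depth_sweep(needle: str, filler: list[str],
                depths=(0.0, 0.25, 0.5, 0.75, 1.0)) -> dict[float, str]:
    """One prompt per depth. Send each to the model and record whether it recovers the needle."""
    return {d: build_haystack(needle, filler, d) for d in depths}
```

If accuracy dips at middle depths on your corpus, that is a direct argument for retrieving the relevant passage and putting it up front.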

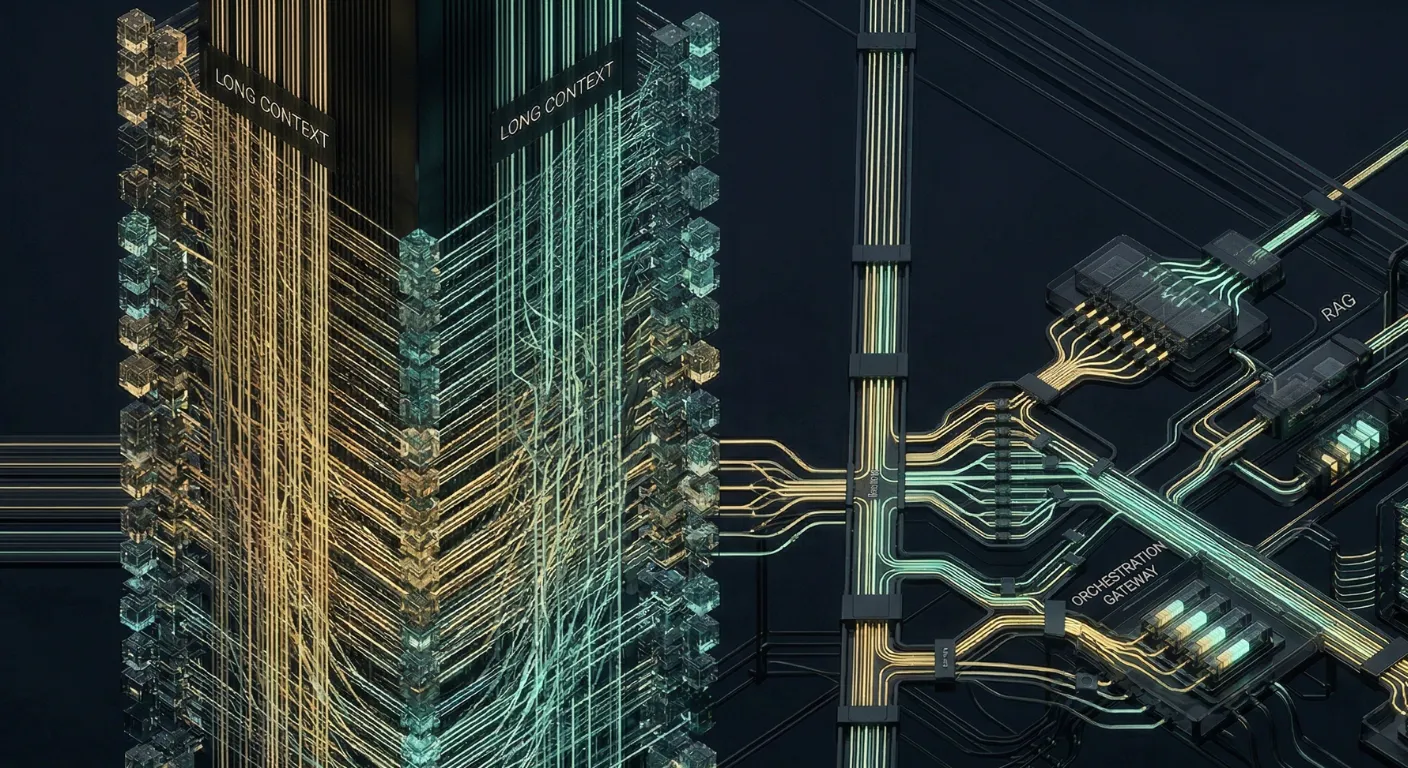

The hybrid architectures that are winning

The most effective systems we've seen aren't picking one approach. They're combining them:

RAG-to-long-context escalation

Start with RAG for the first attempt. If the model's confidence is low, or if the question clearly requires broader context than the retrieved chunks provide, escalate to a long-context call with a larger document set. Most queries are answered cheaply by RAG; only the hard ones pay the long-context cost.
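Here is a sketch of the escalation logic. It assumes each answerer returns a confidence score in [0, 1] and can flag when the retrieved chunks were insufficient. How you obtain those signals (self-reported, a verifier model, retrieval scores) is outside this sketch, and the 0.7 threshold is an arbitrary placeholder:

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Answer:
    text: str
    confidence: float                 # assumed score in [0, 1]
    needs_more_context: bool = False  # e.g. the model reports the chunks were insufficient


def answer_with_escalation(question: str,
                           rag_answer: Callable[[str], Answer],
                           long_context_answer: Callable[[str], Answer],
                           threshold: float = 0.7) -> tuple[Answer, str]:
    # Cheap path first: every query gets a RAG attempt.
    first = rag_answer(question)
    if first.confidence >= threshold and not first.needs_more_context:
        return first, "rag"
    # Low confidence or insufficient context: pay for the long-context call.
    return long_context_answer(question), "long_context"
```

Logging which path each query took shows what fraction of traffic actually pays the long-context cost, which is the number that makes or breaks this pattern.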

Long-context summarization feeding RAG

Use long context to build better indexes. A long-context model reads through a document or transcript once and generates structured summaries and metadata, which are then stored in a vector store. The RAG layer retrieves from those enriched representations. This is slower to build, but it often produces noticeably better retrieval quality than chunking raw content.
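Here is a sketch of the enrichment step. It assumes a hypothetical `summarize` function that makes one long-context call per document and returns a summary plus topic tags. The record shape and the choice to embed the summary together with its topics are illustrative, not a prescribed schema:

```python
from dataclasses import dataclass, field


@dataclass
class EnrichedRecord:
    doc_id: str
    summary: str
    topics: list[str] = field(default_factory=list)


def enrich(doc_id: str, text: str, summarize) -> EnrichedRecord:
    """One long-context pass: the model reads the whole document and returns structured fields."""
    result = summarize(text)  # hypothetical: returns {"summary": str, "topics": [str, ...]}
    return EnrichedRecord(doc_id, result["summary"], list(result.get("topics", [])))


def to_index_entry(rec: EnrichedRecord) -> tuple[str, str, dict]:
    """Split a record into (id, text to embed, filterable metadata) for the vector store."""
    text_to_embed = rec.summary + "\nTopics: " + ", ".join(rec.topics)
    return rec.doc_id, text_to_embed, {"topics": rec.topics}
```

The expensive long-context read happens once per document at index time, not once per query.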

Context windows as working memory

Treat the long context as the model's working memory for a session — populated from RAG on demand, but kept warm across turns. Retrieval happens when new information is needed; the long context holds the running conversation, the user's goals, and the content already pulled in. This is the pattern that's becoming standard in agent architectures.
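Here is a minimal sketch of session working memory. It assumes a hypothetical `retrieve(query)` that returns `(doc_id, text)` pairs and reuses a rough four-characters-per-token estimate. Retrieval runs only when a turn needs new information, and documents already loaded are not fetched twice. Anything that would exceed the token budget is skipped rather than evicting older content, which is one simple policy among many:

```python
class SessionContext:
    """Per-session working memory: retrieved documents plus the user's turns so far."""

    def __init__(self, retrieve, token_budget: int):
        self.retrieve = retrieve          # hypothetical: query -> list of (doc_id, text)
        self.token_budget = token_budget
        self.docs: dict[str, str] = {}    # content already pulled in, by doc id
        self.turns: list[str] = []        # user messages so far

    def token_estimate(self) -> int:
        # Rough ~4 chars/token stand-in for a real tokenizer.
        return (sum(map(len, self.docs.values())) + sum(map(len, self.turns))) // 4

    def add_turn(self, user_msg: str, needs_new_info: bool) -> None:
        self.turns.append(user_msg)
        if not needs_new_info:
            return  # answer from what is already warm in context
        for doc_id, text in self.retrieve(user_msg):
            if doc_id in self.docs:
                continue  # loaded in an earlier turn; don't pay for it twice
            self.docs[doc_id] = text
            if self.token_estimate() > self.token_budget:
                del self.docs[doc_id]  # would overflow the budget; skip it

    def render(self) -> str:
        # Retrieved content first, then the conversation so far.
        return "\n\n".join(list(self.docs.values()) + self.turns)
```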

Growing context windows aren't killing RAG. Instead, RAG is becoming one layer in a stack that also includes long context, prompt caching, and context engineering. The question isn't which to use; it's which to use where.

The real decision criteria

When a team asks me whether they should use RAG or long context, these are the questions I actually work through with them:

- How big is the relevant corpus per request?

- How much does a single request need to cost at scale?

- How often does the underlying content change?

- How precisely does the answer need to map to specific source content?

- How cleanly can the task be bounded to a single session?

The answers to those questions don't give you "RAG" or "long context." They usually give you a hybrid — and that hybrid is almost always better than either pure approach. The teams still debating which one wins are asking the wrong question.
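The five questions can be turned into a rough first-pass helper. Everything here is a sketch: the thresholds, the hypothetical $1 per million tokens price, and the mapping from answers to layers are assumptions meant to start the conversation, not settle it:

```python
from dataclasses import dataclass


@dataclass
class Workload:
    relevant_tokens_per_request: int   # how big is the relevant corpus per request?
    max_cost_per_request_usd: float    # what can one request cost at scale?
    content_changes_often: bool        # how often does the content change?
    needs_exact_source_mapping: bool   # must answers map to specific passages?
    single_session: bool               # can the task be bounded to one session?


def suggest_layers(w: Workload, context_window: int = 1_000_000,
                   usd_per_million_tokens: float = 1.0) -> set[str]:
    fits = w.relevant_tokens_per_request <= context_window
    long_ctx_cost = w.relevant_tokens_per_request * usd_per_million_tokens / 1_000_000
    # Retrieval is needed when content won't fit, costs too much to send whole,
    # changes often, or answers must map to specific passages.
    needs_rag = (not fits
                 or long_ctx_cost > w.max_cost_per_request_usd
                 or w.content_changes_often
                 or w.needs_exact_source_mapping)
    layers = set()
    if needs_rag:
        layers.add("rag")
    # Long context earns its place for bounded, single-session work that fits,
    # or on its own when nothing argues for retrieval.
    if fits and (w.single_session or not needs_rag):
        layers.add("long_context")
    return layers


print(sorted(suggest_layers(Workload(300_000, 1.0, True, False, True))))  # ['long_context', 'rag']
```

A result containing both layers is the hybrid case, and for most real workloads that is where the helper lands.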