Why chunking matters so much

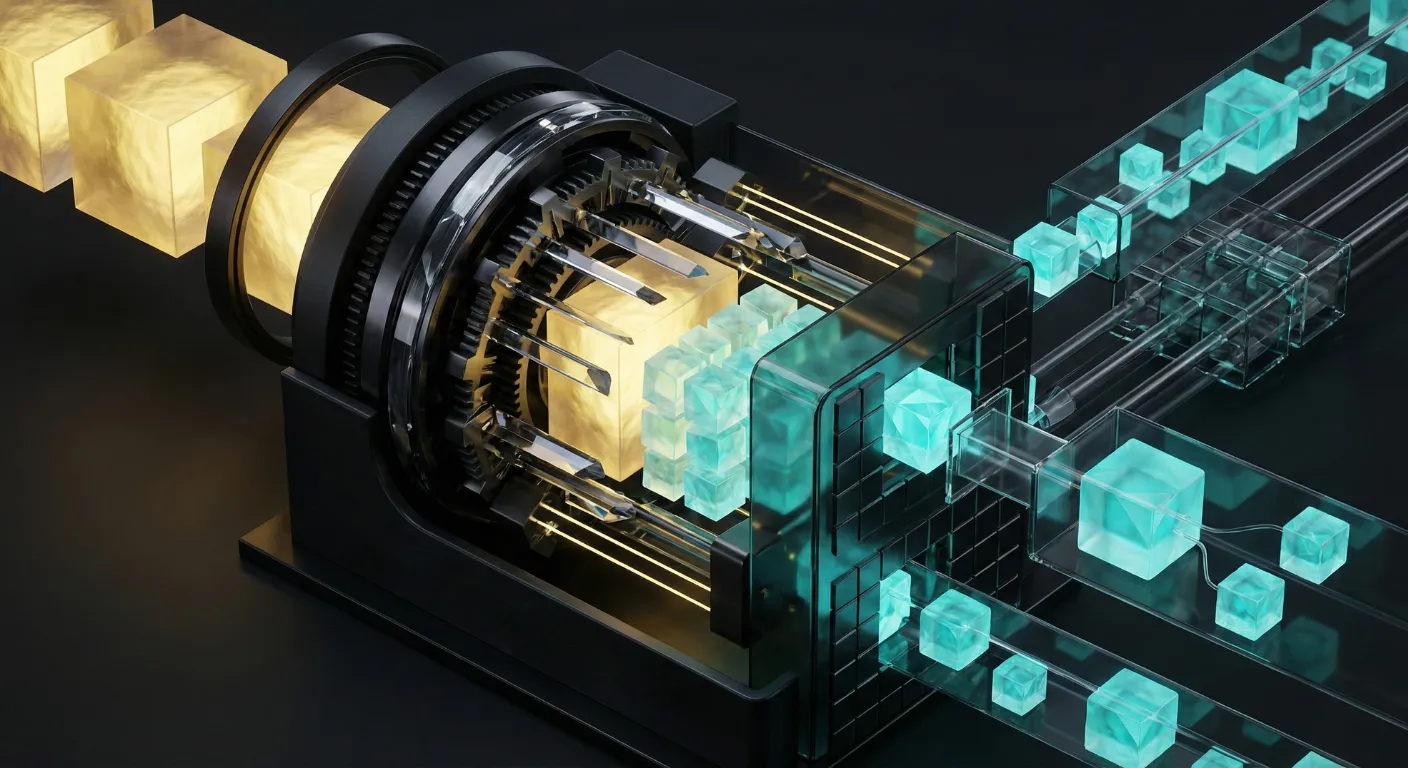

In a RAG system, chunking is the process of splitting source documents into pieces that can be individually embedded and retrieved. It seems like a minor preprocessing step. It isn't.

Chunking determines what the retrieval system can find. If a relevant piece of information spans two chunks, neither chunk alone contains the full answer, and the model may hallucinate or say it doesn't know. If chunks are too large, the embedding becomes a noisy average of multiple topics, and retrieval precision drops. If they're too small, each chunk loses the surrounding context needed to interpret it.

Getting chunking right is often one of the highest-leverage optimizations a RAG team can make, and it requires surprisingly little code.

The common strategies

Fixed-size chunking

Split the document into chunks of a fixed token count (e.g., 512 tokens) with overlap between consecutive chunks (e.g., 50 tokens, or roughly 10%).

This is the simplest approach and works surprisingly well as a baseline. The overlap ensures that information near chunk boundaries isn't completely lost. Most teams should start here and only move to more sophisticated strategies when they've identified specific quality problems that chunking improvements can solve.
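As a rough illustration of the idea, here is a minimal sketch of fixed-size chunking with overlap. The function name `chunk_fixed` is made up for this example, and the input is assumed to be a list of tokens that some tokenizer has already produced.

```python
def chunk_fixed(tokens, chunk_size=512, overlap=50):
    """Split a token list into fixed-size windows that share `overlap` tokens."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be non-negative and smaller than chunk_size")
    step = chunk_size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + chunk_size])
        if start + chunk_size >= len(tokens):
            break  # this window already reached the end of the document
    return chunks


# Placeholder tokens stand in for the output of a real tokenizer.
tokens = [f"tok{i}" for i in range(1200)]
chunks = chunk_fixed(tokens, chunk_size=512, overlap=50)
print([len(c) for c in chunks])  # [512, 512, 276]
```

The last 50 tokens of each chunk reappear at the start of the next one, so a sentence that straddles a boundary is still fully present in at least one chunk as long as it is shorter than the overlap.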

Recursive character splitting

Split on natural boundaries — paragraphs first, then sentences, then words — with a target chunk size. This preserves semantic units better than fixed-size splitting because it avoids cutting mid-sentence or mid-paragraph.

This is a sensible default once the fixed-size baseline stops being good enough. It's slightly more complex than fixed-size splitting but tends to produce better retrieval quality because chunks are more likely to be semantically coherent.
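The paragraph-then-sentence-then-word fallback can be sketched in a few lines. This is a simplified illustration, not the implementation of any particular library: it measures size in characters rather than tokens, the separator list is an assumption, and separators that fall on a chunk boundary are dropped.

```python
SEPARATORS = ["\n\n", ". ", " "]  # paragraphs, then sentences, then words


def recursive_split(text, max_chars=200, separators=SEPARATORS):
    """Split on the coarsest separator first, recursing into oversized pieces."""
    if len(text) <= max_chars:
        return [text] if text.strip() else []
    if not separators:
        # No natural boundary left: fall back to a hard cut.
        return [text[i:i + max_chars] for i in range(0, len(text), max_chars)]

    sep, finer = separators[0], separators[1:]
    chunks, current = [], ""
    for piece in text.split(sep):
        candidate = f"{current}{sep}{piece}" if current else piece
        if len(candidate) <= max_chars:
            current = candidate  # keep packing pieces into the current chunk
            continue
        if current:
            chunks.append(current)
            current = ""
        if len(piece) <= max_chars:
            current = piece
        else:
            # This piece alone is too large, so retry with a finer separator.
            chunks.extend(recursive_split(piece, max_chars, finer))
    if current:
        chunks.append(current)
    return [c for c in chunks if c.strip()]
```

Whole paragraphs stay together when they fit, and a finer separator is only used for the pieces that are still too large.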

Semantic chunking

Use an embedding model to detect topic boundaries within the document, splitting where the semantic content shifts. This produces the most semantically coherent chunks but is significantly more expensive (requires embedding every sentence or paragraph to detect boundaries) and harder to tune.

Use this when your documents contain multiple distinct topics and you need high retrieval precision. The overhead is justified for knowledge bases where quality matters more than indexing speed.
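One simple way to detect topic boundaries is to compare the embeddings of adjacent sentences and split where similarity drops. The sketch below assumes that approach. `toy_embed` is a bag-of-words stand-in so the example runs without a model; in practice you would pass in a real embedding function, and the `threshold` value here is arbitrary and would need tuning.

```python
import math
import re
from collections import Counter


def toy_embed(sentence):
    """Stand-in for a real embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z]+", sentence.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def semantic_chunks(sentences, embed=toy_embed, threshold=0.2):
    """Start a new chunk wherever adjacent sentences fall below the threshold."""
    if not sentences:
        return []
    vectors = [embed(s) for s in sentences]
    chunks = [[sentences[0]]]
    for prev, curr, sentence in zip(vectors, vectors[1:], sentences[1:]):
        if cosine(prev, curr) < threshold:
            chunks.append([])  # similarity dropped: treat as a topic boundary
        chunks[-1].append(sentence)
    return [" ".join(c) for c in chunks]
```

Every sentence is embedded once before any chunk is produced, which is where the extra indexing cost comes from.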

Document-structure-aware chunking

For structured documents (HTML, Markdown, PDFs with headers), split along the document's own structure: sections, subsections, paragraphs. This preserves the author's intended logical units and typically produces the most meaningful chunks.

This is the best approach when your documents have reliable structure, but requires parsing logic for each document format.
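For Markdown, the parsing logic can be quite small. The following is a minimal sketch that emits one chunk per section and records the heading path above it. It assumes ATX-style `#` headings and does not handle `#` lines inside fenced code blocks, so treat it as a starting point rather than a complete parser.

```python
import re

HEADING = re.compile(r"^(#{1,6})\s+(.*)$")


def split_markdown_sections(markdown):
    """Return one chunk per section, each tagged with its heading path."""
    sections, path, body = [], [], []

    def flush():
        text = "\n".join(body).strip()
        if text:
            sections.append({"heading_path": " > ".join(path), "text": text})
        body.clear()

    for line in markdown.splitlines():
        match = HEADING.match(line)
        if match:
            flush()  # close the section that was open before this heading
            level = len(match.group(1))
            del path[level - 1:]  # drop headings at this depth or deeper
            path.append(match.group(2).strip())
        else:
            body.append(line)
    flush()
    return sections
```

Sections that are still too long can be passed through a size-based splitter afterwards, keeping the same heading path on each resulting piece.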

The parent-child pattern

One of the most effective advanced patterns: embed small chunks (for precise retrieval) but retrieve the larger parent chunk (for sufficient context to the LLM).

The implementation: split documents into small chunks (e.g., 256 tokens), embed these for retrieval, but maintain a mapping to their parent chunk (e.g., the full section or a 1024-token window). When a small chunk is retrieved, pass the parent chunk to the model.

This gives you the best of both worlds: precise retrieval (because small chunks produce focused embeddings) and rich context (because the model sees the surrounding information).
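The mapping itself is simple bookkeeping. Here is a minimal sketch, assuming fixed token windows for both levels; the function names and the dictionary layout are invented for illustration, and the vector search that produces the child hits is left out.

```python
def build_parent_child_index(tokens, parent_size=1024, child_size=256):
    """Split tokens into parent windows, then split each parent into children."""
    parents, children = [], []
    for p_start in range(0, len(tokens), parent_size):
        parent = tokens[p_start:p_start + parent_size]
        parent_id = len(parents)
        parents.append(parent)
        for c_start in range(0, len(parent), child_size):
            children.append({
                "parent_id": parent_id,  # link back to the larger window
                "tokens": parent[c_start:c_start + child_size],
            })
    return parents, children


def expand_to_parents(hit_child_ids, children, parents):
    """Map retrieved child chunks to their parents, deduplicated, in rank order."""
    seen, result = set(), []
    for child_id in hit_child_ids:
        parent_id = children[child_id]["parent_id"]
        if parent_id not in seen:
            seen.add(parent_id)
            result.append(parents[parent_id])
    return result
```

Only the child chunks are embedded and searched. Deduplicating parents matters because several top-ranked children often belong to the same parent, and passing it to the model twice wastes context.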

Chunk size: the critical variable

The optimal chunk size depends on several factors:

- Embedding model capacity — Most embedding models are optimized for inputs up to their max length (typically 512–8192 tokens). Performance often peaks well below the maximum.

- Query type — Short, specific queries are best served by smaller chunks. Broad, conceptual queries benefit from larger chunks.

- Information density — Dense technical content (API docs, specifications) works better with smaller chunks. Narrative content (articles, reports) works better with larger chunks.

A practical approach: test chunk sizes of 256, 512, and 1024 tokens on your evaluation set and measure retrieval quality at each. The optimal size is rarely the one you'd guess.
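A comparison like this can be a short script. The sketch below is a toy harness under several assumptions: chunks are measured in whitespace-separated words rather than tokens, the retriever is a word-overlap ranker standing in for embedding search, and the evaluation set is a list of `(query, expected_answer_text)` pairs.

```python
def chunk_words(words, size):
    """Split a word list into consecutive chunks of `size` words, no overlap."""
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def retrieve(query, chunks, k):
    """Toy retriever: rank chunks by how many query words they contain."""
    terms = set(query.lower().split())
    ranked = sorted(
        chunks,
        key=lambda c: len(terms & set(c.lower().split())),
        reverse=True,
    )
    return ranked[:k]


def hit_rate(eval_set, chunks, k=3):
    """Fraction of queries whose expected answer text appears in a top-k chunk."""
    hits = sum(
        any(answer in c for c in retrieve(query, chunks, k))
        for query, answer in eval_set
    )
    return hits / len(eval_set)


def compare_chunk_sizes(document, eval_set, sizes=(256, 512, 1024), k=3):
    """Return the hit rate for each candidate chunk size."""
    words = document.split()
    return {size: hit_rate(eval_set, chunk_words(words, size), k) for size in sizes}
```

Swapping in your real chunker and retriever keeps the structure the same: fix the evaluation set, vary only the chunk size, and compare one retrieval metric across runs.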

Metadata enrichment

Chunks should carry metadata that helps both retrieval and the LLM:

- Source document — Title, author, date, URL

- Position — Section header, page number, document position

- Hierarchical context — The heading structure above the chunk (e.g., "Chapter 3 > Section 3.2 > Subsection 3.2.1")

This metadata enables filtered retrieval ("find information from documents published after 2024") and helps the model cite its sources accurately.
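One way to carry this metadata is a small record type stored alongside each embedding. The field names below are illustrative assumptions, not a required schema, and most vector stores offer their own metadata filtering that would replace the list comprehension here.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Chunk:
    text: str
    title: str
    url: str
    published: date
    heading_path: str  # e.g. "Chapter 3 > Section 3.2 > Subsection 3.2.1"


def filter_published_after(chunks, cutoff):
    """Metadata filter to apply before (or alongside) vector search."""
    return [c for c in chunks if c.published > cutoff]


def format_for_prompt(chunk):
    """Prefix the chunk with its provenance so the model can cite it."""
    return f"[{chunk.title} | {chunk.heading_path} | {chunk.url}]\n{chunk.text}"
```

For example, `filter_published_after(chunks, date(2024, 12, 31))` keeps only chunks from documents published after 2024, and `format_for_prompt` puts the title, heading path, and URL in front of the text the model sees.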

Common chunking mistakes

- No overlap between chunks — Information at boundaries is lost. Always use at least 10% overlap with fixed-size chunking.

- Ignoring document structure — Splitting a table across two chunks makes both chunks unusable. Preserve structural elements.

- One strategy for all document types — Technical documentation, legal contracts, and blog posts have very different structures. Adapt your chunking strategy to the content.

- Not evaluating chunking quality — The only way to know if your chunking is good is to measure retrieval quality. Run retrieval evaluations with different chunking strategies and compare.

Chunking isn't sexy, but it's the foundation. Get it right and everything downstream gets better.