The split that's now standard

Look at the model lineup from any major provider in mid-2026 and you'll see the same pattern: a "standard" tier and a "thinking" tier. GPT-5.4 ships as Standard, Thinking, and Pro. Anthropic's lineup splits along the same lines. Gemini does too. Even open-source releases like Gemma 4 and Nemotron 3 distinguish reasoning-tuned variants from their general-purpose counterparts.

This split isn't marketing. It reflects a real divergence in how the models are trained and how they behave at inference time, and a real choice that every team building on LLMs now has to make feature by feature. Teams that make this choice well save substantial money and latency. Teams that default to one tier across the board leave a lot of value on the table.

What "reasoning" actually means under the hood

A reasoning model isn't a fundamentally different architecture from a standard LLM. It's the same kind of transformer, trained with additional techniques that encourage it to generate intermediate thinking steps before producing a final answer. The model essentially writes a scratchpad to itself, often hidden from the user, and uses that scratchpad to work through the problem more carefully than a one-shot response would allow.

The result is dramatically better performance on tasks that benefit from deliberation — multi-step math, complex code generation, planning, logical puzzles. The cost is also dramatic: reasoning models typically generate 5–20x more tokens per response than their standard counterparts, and the latency is correspondingly higher. A response that takes 800ms from a standard model can take 15 seconds from a reasoning variant on the same prompt.
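To make the token-to-latency link concrete, here is a rough back-of-the-envelope sketch. It assumes latency is dominated by output generation at a constant decode throughput, which is a simplification; the function names, the 80-token answer, and the 100 tokens/second figure are illustrative assumptions, not measurements. Only the 5–20x multiplier comes from the text above.

```python
def generation_latency_s(output_tokens: int, tokens_per_second: float,
                         time_to_first_token_s: float = 0.0) -> float:
    """Approximate wall-clock time to generate a response.

    Assumes a constant decode throughput, which real serving stacks
    only roughly approximate.
    """
    return time_to_first_token_s + output_tokens / tokens_per_second


def reasoning_latency_range_s(answer_tokens: int, tokens_per_second: float,
                              multipliers: tuple = (5, 20)) -> tuple:
    """Latency range if a reasoning variant emits 5-20x the tokens."""
    low, high = multipliers
    return (
        generation_latency_s(answer_tokens * low, tokens_per_second),
        generation_latency_s(answer_tokens * high, tokens_per_second),
    )


if __name__ == "__main__":
    # Hypothetical numbers: an 80-token answer decoded at 100 tokens/second.
    standard = generation_latency_s(80, 100.0)
    low, high = reasoning_latency_range_s(80, 100.0)
    print(f"standard: {standard:.1f}s, reasoning: {low:.1f}s to {high:.1f}s")
```

Under these assumptions the standard response lands around 0.8 seconds and the reasoning response somewhere between 4 and 16 seconds, which is the same order of magnitude as the 800ms versus 15 seconds example.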

Where reasoning models clearly win

Hard math and quantitative reasoning

This is the cleanest win. On benchmarks involving multi-step calculation, formal reasoning, or word problems, reasoning models routinely outperform standard models by 20–40 percentage points. If your application involves financial modeling, scientific computation, or anything where the answer needs to be derived rather than recalled, reasoning models are usually worth the cost.

Complex code generation

For non-trivial code — anything involving multiple files, careful state management, or subtle algorithmic choices — reasoning models produce noticeably better output. They catch their own bugs in the thinking phase, consider edge cases the standard model would miss, and structure code more carefully. For routine code (CRUD endpoints, boilerplate, simple transformations), the standard model is fine and much faster.

Planning and decomposition

Tasks that require breaking down a goal into subgoals — research questions, project planning, multi-step workflows — benefit substantially from the deliberation phase. The model uses the scratchpad to consider alternatives before committing to a plan, which is exactly what you want.

Where standard models are the better choice

Anything latency-sensitive

This is the obvious one but it's worth being explicit. A reasoning model in an interactive chat interface is a frustrating experience. Users tap their fingers. They lose flow. They navigate away. If your feature needs sub-2-second responses, a reasoning model is almost never the right answer — even if it would produce a slightly better response.

Pattern-matching and recall

Many of the things people use LLMs for don't actually require reasoning. Summarization, classification, translation, format conversion, sentiment analysis, simple Q&A over retrieved documents — these are pattern-matching tasks that a standard model handles just as well as a reasoning model, in a fraction of the time.

High-volume workloads

The cost gap matters here. A standard model serving a million requests a day at $0.005 per request costs $5,000 a day. A reasoning model serving the same volume at $0.05 per request costs $50,000 a day. If the quality difference doesn't translate into materially better outcomes for your users, you're paying ten times the price for a vanity upgrade.
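The arithmetic is simple enough to script and rerun with your own numbers. This is a minimal sketch using the per-request prices from the example above; the function names and the 30-day month are assumptions made for illustration.

```python
def daily_cost(requests_per_day: int, cost_per_request: float) -> float:
    """Total spend per day at a flat per-request price."""
    return requests_per_day * cost_per_request


def monthly_cost_gap(requests_per_day: int, standard_cost: float,
                     reasoning_cost: float, days_per_month: int = 30) -> float:
    """Extra monthly spend from choosing the reasoning tier.

    days_per_month defaults to 30 as a simplifying assumption.
    """
    gap_per_day = (daily_cost(requests_per_day, reasoning_cost)
                   - daily_cost(requests_per_day, standard_cost))
    return gap_per_day * days_per_month


if __name__ == "__main__":
    requests = 1_000_000
    print(f"standard:  ${daily_cost(requests, 0.005):,.0f} per day")
    print(f"reasoning: ${daily_cost(requests, 0.05):,.0f} per day")
    print(f"gap:       ${monthly_cost_gap(requests, 0.005, 0.05):,.0f} per month")
```

With the figures from the example, the daily gap of $45,000 adds up to roughly $1.35 million over a 30-day month.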

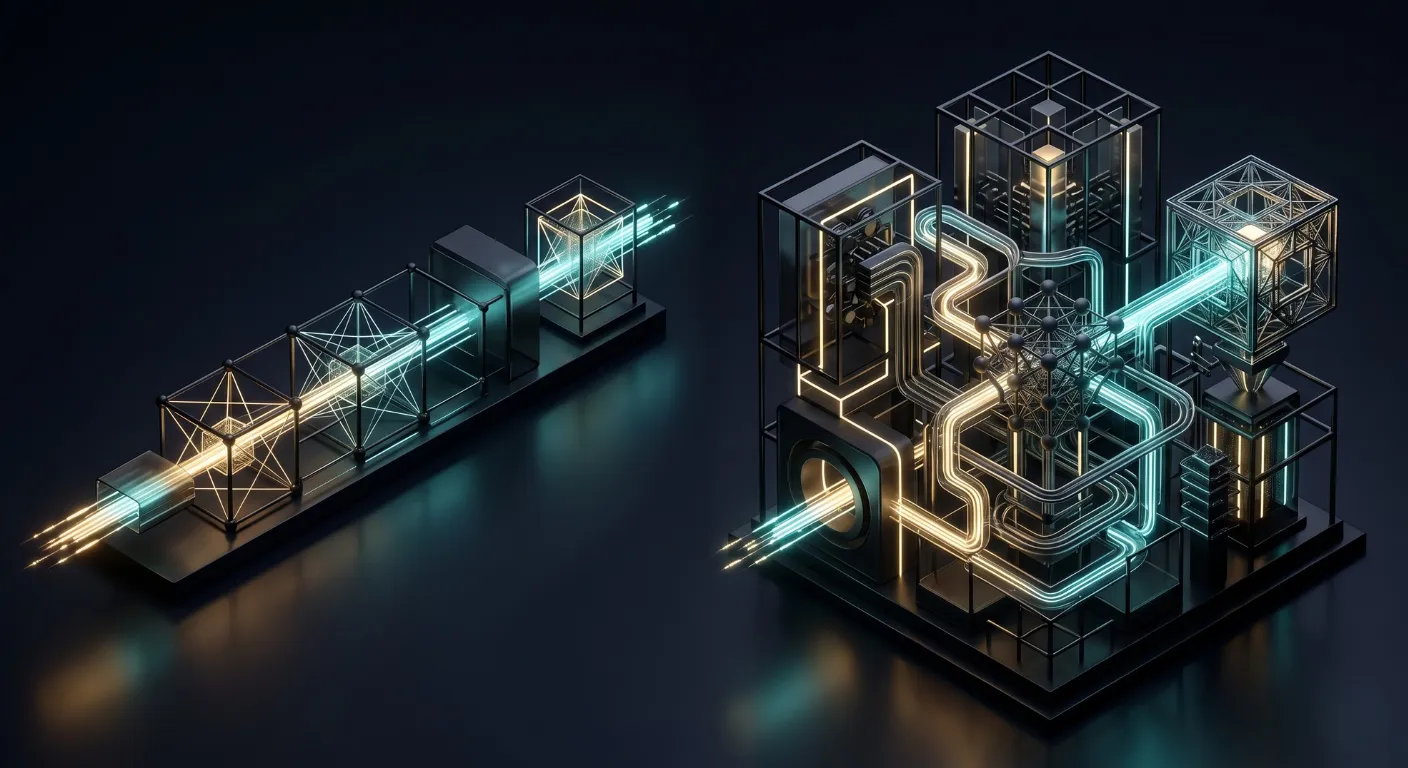

The hybrid pattern that's emerging

The most interesting architectures we've seen don't choose between reasoning and standard models. They use both, routed by task type:

- Standard model for the front end — handles user interaction, generates immediate responses, manages the conversation flow

- Reasoning model for the back end — invoked asynchronously for hard problems, returns results that get incorporated into the next user-facing response

This is a particular case of the model routing pattern that's becoming standard, and it's where the real value of the reasoning/standard split shows up. The user doesn't wait for the slow model. The slow model does the hard work in the background and the fast model presents the result.
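One way to picture the hybrid pattern is the sketch below. It is not any provider's API: `standard_model` and `reasoning_model` are hypothetical stubs that simulate latency with `asyncio.sleep`, and the `hard` flag stands in for whatever routing signal your application uses. The point is only the control flow: the fast model always answers right away, and the slow model's result is folded into a later turn once it is ready.

```python
import asyncio
from typing import Optional


async def standard_model(prompt: str) -> str:
    """Hypothetical stand-in for a fast standard-tier model call."""
    await asyncio.sleep(0.01)
    return f"[fast] {prompt}"


async def reasoning_model(prompt: str) -> str:
    """Hypothetical stand-in for a slow reasoning-tier model call."""
    await asyncio.sleep(0.1)
    return f"[deliberate] {prompt}"


class HybridAssistant:
    """Fast model in front, reasoning model running in the background."""

    def __init__(self) -> None:
        self._pending: Optional[asyncio.Task] = None

    async def respond(self, user_message: str, hard: bool = False) -> str:
        # Collect a finished background result, if there is one.
        background_result = None
        if self._pending is not None and self._pending.done():
            background_result = self._pending.result()
            self._pending = None

        # Start the slow model without waiting for it.
        if hard and self._pending is None:
            self._pending = asyncio.create_task(reasoning_model(user_message))

        prompt = user_message
        if background_result is not None:
            prompt = f"{user_message} (background result: {background_result})"
        # The user only ever waits on the fast model.
        return await standard_model(prompt)


async def demo() -> list:
    assistant = HybridAssistant()
    first = await assistant.respond("Plan the migration", hard=True)
    await asyncio.sleep(0.2)  # simulated time the user spends reading and typing
    second = await assistant.respond("Any update?")
    return [first, second]


if __name__ == "__main__":
    for line in asyncio.run(demo()):
        print(line)
```

A production version would also need timeouts, error handling for failed background tasks, and a real classifier deciding what counts as hard; those are left out to keep the shape of the pattern visible.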

The right question isn't whether reasoning models are better. It's whether your task is one where deliberation helps more than the added latency hurts. Most user-facing tasks are not.

A decision rubric

When you're about to ship a new AI feature, ask these three questions in order:

- Does the task have a single correct answer that's hard to derive? If yes, lean toward reasoning. If no, lean toward standard.

- Is the user waiting in real time for the response? If yes, lean toward standard. If no, reasoning is on the table.

- At your projected volume, can you afford the cost difference? Multiply the per-request cost gap by your expected monthly requests. If the number is uncomfortable, you have your answer.

If all three answers point to reasoning, go reasoning. If they're mixed, the hybrid pattern is probably your friend. If they all point to standard, don't be talked out of it by benchmark hype — the standard model is the right tool, and the cost savings will fund features your users actually notice.
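The rubric is small enough to write down as a function. This is a sketch of one reading of it, not a definitive rule: the name `recommend_tier` and its parameters are made up for illustration, and it assumes that an affordable cost gap counts as a vote for reasoning while an uncomfortable one counts as a vote for standard.

```python
def recommend_tier(hard_to_derive: bool, user_waiting: bool,
                   cost_gap_affordable: bool) -> str:
    """Map the three rubric answers to 'reasoning', 'standard', or 'hybrid'."""
    votes = [
        # Q1: a single correct answer that is hard to derive favors reasoning.
        "reasoning" if hard_to_derive else "standard",
        # Q2: a user waiting in real time favors standard.
        "standard" if user_waiting else "reasoning",
        # Q3 (assumed reading): an affordable cost gap keeps reasoning viable.
        "reasoning" if cost_gap_affordable else "standard",
    ]
    if all(vote == "reasoning" for vote in votes):
        return "reasoning"
    if all(vote == "standard" for vote in votes):
        return "standard"
    # Mixed answers point to the hybrid pattern.
    return "hybrid"


if __name__ == "__main__":
    # Offline financial model, affordable at projected volume.
    print(recommend_tier(hard_to_derive=True, user_waiting=False,
                         cost_gap_affordable=True))
    # Live chat summarization at high volume.
    print(recommend_tier(hard_to_derive=False, user_waiting=True,
                         cost_gap_affordable=False))
```

Encoding the rubric this way mostly helps as a checklist in design reviews: it forces each feature to answer all three questions explicitly before a tier is chosen.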