The inevitable component

Every team that builds something non-trivial on top of LLMs goes through the same progression. You start with direct API calls from your application. Then you add a retry wrapper. Then a timeout wrapper. Then you need to log requests for debugging, so you add a logging layer. Then a second model provider for fallback. Then cost tracking per team. Then rate limiting so the batch job doesn't starve the interactive traffic.

Six months in, you look at your llm_client.py and realize you've built a gateway — badly, and without meaning to. Every team arrives at this component eventually. The teams that get there intentionally end up with a clean, centralized layer. The teams that grow one by accident end up with the same functionality sprinkled across five microservices, half-implemented, inconsistent, and nobody's favorite thing to maintain.

What an LLM gateway does

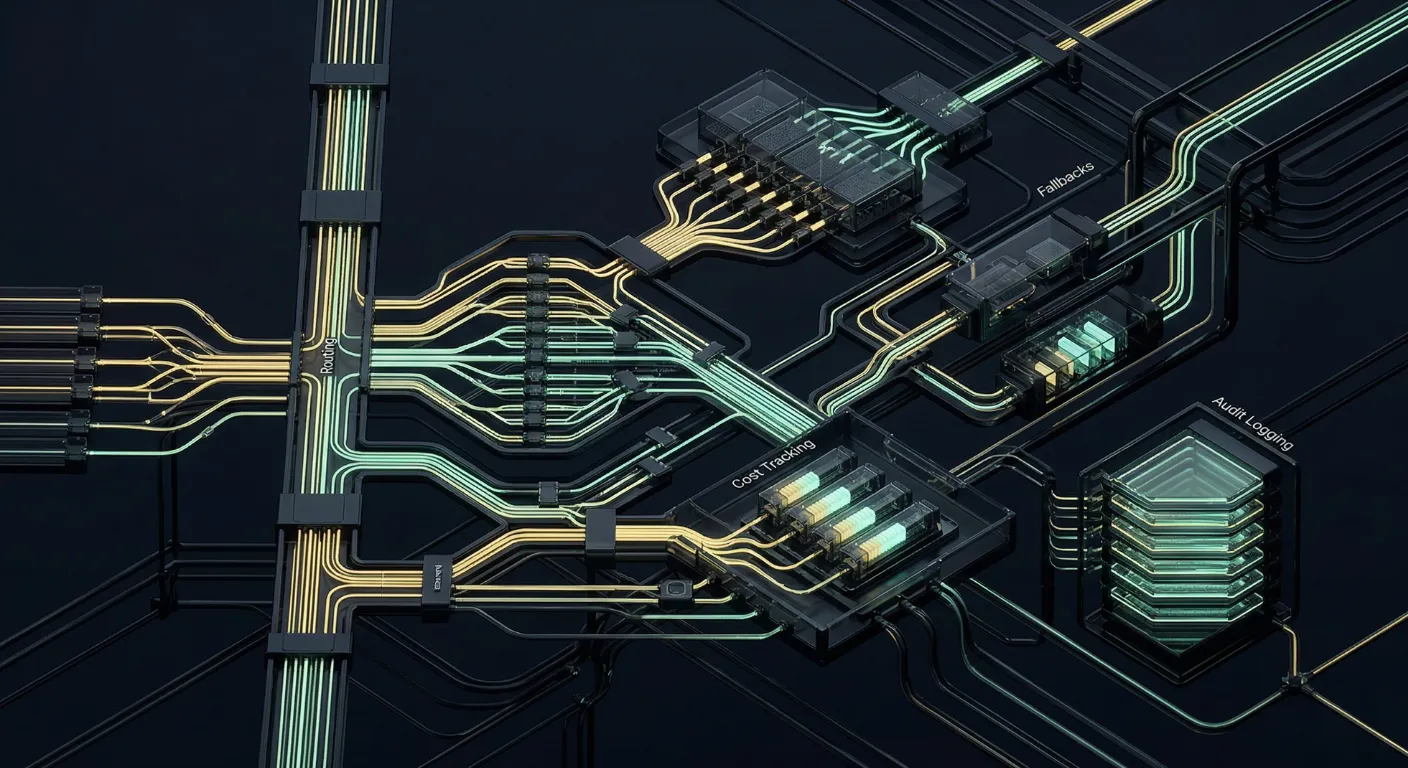

A gateway is a single service that sits between your application code and every LLM provider you use. All model traffic flows through it. In exchange, it handles:

- Provider abstraction — a unified interface across OpenAI, Anthropic, Google, Mistral, and self-hosted models

- Routing — deciding which model serves a given request based on policy

- Fallbacks — automatically retrying on a different provider when the primary fails or rate limits

- Rate limiting and quotas — per-team, per-user, per-endpoint

- Cost tracking — attributing spend to the team, feature, or customer that generated it

- Caching — both exact-match and semantic caching where appropriate

- Audit logging — full request/response capture for debugging, compliance, and eval replay

- Redaction — stripping PII or secrets from prompts before they leave your network

- Response streaming — proxying streaming responses without buffering the whole thing
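
The first and third items, provider abstraction and fallbacks, are the core of the pattern. Below is a minimal Python sketch in which every name is hypothetical (`Provider`, `EchoProvider`, `Gateway`, `ProviderError`). Each adapter exposes the same `complete` method, and the gateway tries providers in order until one succeeds. `EchoProvider` is only a stand-in. A real adapter would translate the request into a specific vendor SDK call and map that vendor's errors onto `ProviderError`.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass
class CompletionRequest:
    model: str  # a gateway-level model name or alias
    prompt: str


@dataclass
class CompletionResponse:
    provider: str  # which provider actually served the request
    text: str


class ProviderError(Exception):
    """Raised by an adapter when its upstream call fails or is rate limited."""


class Provider(Protocol):
    """The single interface every provider adapter implements."""

    name: str

    def complete(self, request: CompletionRequest) -> CompletionResponse: ...


class EchoProvider:
    """Stand-in adapter for illustration; a real one would call a vendor SDK."""

    def __init__(self, name: str, fail: bool = False) -> None:
        self.name = name
        self.fail = fail

    def complete(self, request: CompletionRequest) -> CompletionResponse:
        if self.fail:
            raise ProviderError(f"{self.name} unavailable")
        return CompletionResponse(self.name, f"[{request.model}] {request.prompt}")


class Gateway:
    def __init__(self, providers: list[Provider]) -> None:
        # Order matters: the first provider is primary, the rest are fallbacks.
        self.providers = providers

    def complete(self, request: CompletionRequest) -> CompletionResponse:
        errors: list[str] = []
        for provider in self.providers:
            try:
                return provider.complete(request)
            except ProviderError as exc:
                errors.append(str(exc))  # record the failure, then try the next provider
        raise ProviderError("all providers failed: " + "; ".join(errors))


gateway = Gateway([EchoProvider("primary", fail=True), EchoProvider("secondary")])
print(gateway.complete(CompletionRequest("default", "hello")).provider)  # prints: secondary
```

Callers only ever see `CompletionRequest` and `CompletionResponse`, so adding or reordering providers does not touch application code. A real gateway would also translate model names per provider, because the same logical model rarely has the same ID across vendors.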

This is a lot of functionality. You don't need all of it on day one, but you'll need most of it within a year.

Why this matters more in 2026

The case for a gateway has gotten stronger in the past year, for three reasons.

First, few teams run on a single model anymore. Mature teams typically use a frontier model for hard problems, a smaller model for routine ones, and a self-hosted model for privacy-sensitive flows. Without a gateway, every application service needs to know about every provider, and every change touches every service.
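
A gateway lets that split live in one routing policy instead of in every service. The sketch below assumes callers attach simple hints to each request. The `RoutingHints` fields, the `Tier` names, and the "routine"/"hard" labels are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    FRONTIER = "frontier"
    SMALL = "small"
    SELF_HOSTED = "self-hosted"


@dataclass
class RoutingHints:
    contains_sensitive_data: bool
    task_complexity: str  # hypothetical labels: "routine" or "hard"


def choose_tier(hints: RoutingHints) -> Tier:
    # Privacy is checked first, so sensitive traffic always stays self-hosted.
    if hints.contains_sensitive_data:
        return Tier.SELF_HOSTED
    # Hard problems go to the frontier model; everything else to the small one.
    if hints.task_complexity == "hard":
        return Tier.FRONTIER
    return Tier.SMALL
```

For example, `choose_tier(RoutingHints(contains_sensitive_data=False, task_complexity="hard"))` returns `Tier.FRONTIER`. Ordering the checks this way means a complexity rule can never override a privacy rule.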

Second, model churn is constant. New models launch every few weeks. Pricing changes. Capabilities shift. Teams that have centralized their model access through a gateway can evaluate and roll out new models with config changes. Teams that haven't are opening pull requests across their codebase every time something changes.
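
One common way to turn a model swap into a config change is to have application code request a stable alias and let the gateway resolve it. A minimal sketch, assuming a hypothetical JSON config format with placeholder provider and model names:

```python
import json

# Hypothetical routing config; in practice loaded from a file or config service.
ROUTING_CONFIG = json.loads("""
{
  "aliases": {
    "chat-default": {"provider": "vendor-a", "model": "model-a-large"},
    "summarize":    {"provider": "vendor-b", "model": "model-b-small"}
  }
}
""")


def resolve(alias: str, config: dict = ROUTING_CONFIG) -> tuple[str, str]:
    """Map a stable alias used by application code to a concrete provider and model."""
    target = config["aliases"][alias]
    return target["provider"], target["model"]
```

Pointing `summarize` at a different model means editing one entry in the config. Every caller that asks for `summarize` picks up the change without a code change of its own.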

Third, cost visibility has become a board-level concern. When LLM spend is a line item in the company P&L, finance wants to know which product, which team, and which customer is driving it. A gateway is the most practical place to get that data reliably, because every request passes through it along with its caller and its token usage.
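
Attribution works because the gateway sees both who made a request and how many tokens it consumed. The sketch below assumes each request is tagged with `team` and `feature` and that input and output token counts are known after the call. The model names and prices are placeholders, not real rates.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical prices in USD per million tokens; real prices differ and change often.
PRICES_PER_MTOK = {
    "model-a-large": {"input": 3.00, "output": 15.00},
    "model-b-small": {"input": 0.25, "output": 1.25},
}


@dataclass
class UsageRecord:
    team: str
    feature: str
    model: str
    input_tokens: int
    output_tokens: int


def cost_usd(rec: UsageRecord) -> float:
    """Price one request from its token counts and the model's per-token rates."""
    price = PRICES_PER_MTOK[rec.model]
    return (rec.input_tokens * price["input"] + rec.output_tokens * price["output"]) / 1_000_000


def spend_by(records: list[UsageRecord], key: str) -> dict[str, float]:
    """Aggregate spend by any attribution field, e.g. "team" or "feature"."""
    totals: dict[str, float] = defaultdict(float)
    for rec in records:
        totals[getattr(rec, key)] += cost_usd(rec)
    return dict(totals)
```

With these placeholder prices, a request on `model-a-large` with 1,000 input and 500 output tokens costs (1000 × 3.00 + 500 × 15.00) / 1,000,000 = 0.0105 USD.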

Build versus buy

The LLM gateway space has matured. Several open-source and managed options exist, each with different tradeoffs. Before building your own, ask honestly:

- Do your requirements differ meaningfully from what existing gateways offer?

- Is the marginal engineering cost of maintaining a custom gateway worth the marginal flexibility?

- Are you willing to own the on-call burden of a critical-path component?

For most teams, an existing gateway — open-source or managed — is the right starting point. You can always replace it later once you understand your own requirements. Building from scratch makes sense when you have unusual routing logic, strict data residency requirements, or an existing platform that the gateway needs to integrate deeply with.

What to watch out for

Don't make it a bottleneck

A gateway is a critical-path component. If it goes down, your LLM features go down with it. Design it for high availability from the start: stateless, horizontally scalable, with health checks and graceful degradation. A gateway that becomes a single point of failure is worse than no gateway at all.
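
Graceful degradation usually includes not waiting on a provider that is already failing. A per-provider circuit breaker is one way to do that. The sketch below is a simplified version with hypothetical names and defaults. It keeps its state in process memory, which fits a stateless, horizontally scaled gateway: each replica tracks provider health on its own, and nothing has to be shared between replicas.

```python
import time
from typing import Callable, Optional


class CircuitBreaker:
    """After `threshold` consecutive failures, report the provider as unavailable
    for `cooldown_s` seconds so the gateway can skip straight to a fallback."""

    def __init__(self, threshold: int = 3, cooldown_s: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.threshold = threshold
        self.cooldown_s = cooldown_s
        self.clock = clock  # injectable so tests can control time
        self.failures = 0
        self.opened_at: Optional[float] = None

    def allow(self) -> bool:
        if self.opened_at is None:
            return True  # breaker closed: traffic flows normally
        if self.clock() - self.opened_at >= self.cooldown_s:
            # Cooldown elapsed: reset and let traffic reach the provider again.
            self.opened_at = None
            self.failures = 0
            return True
        return False  # breaker open: skip this provider

    def record_success(self) -> None:
        self.failures = 0

    def record_failure(self) -> None:
        self.failures += 1
        if self.failures >= self.threshold:
            self.opened_at = self.clock()
```

The gateway would call `allow()` before trying a provider and move to the next fallback when it returns `False`. This version fully resets after the cooldown; production breakers often use a half-open state that admits only a few probe requests first.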

Don't let it become a god object

It's tempting to keep adding functionality to the gateway until it handles prompt templating, eval running, retrieval, and everything else LLM-related. Resist. A gateway should do one thing well: route model traffic. Other concerns belong in other components, or the gateway becomes impossible to change safely.

Measure what it's actually doing

Instrument the gateway heavily: latency per provider, error rates per model, cache hit rates, and cost per route. The gateway is your best vantage point for understanding how your entire LLM layer behaves. If you're not looking at those metrics regularly, you're flying blind.
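
A minimal way to capture per-route latency and error rates is to wrap each upstream call in a timing context manager. This is an in-memory sketch with hypothetical names (`observe`, `RouteStats`, `STATS`). A real deployment would export these numbers to whatever metrics system you already run rather than keep them in a process-local dict.

```python
import time
from collections import defaultdict
from contextlib import contextmanager
from dataclasses import dataclass, field


@dataclass
class RouteStats:
    calls: int = 0
    errors: int = 0
    latencies_s: list[float] = field(default_factory=list)

    @property
    def error_rate(self) -> float:
        return self.errors / self.calls if self.calls else 0.0


# Keyed by (provider, model); a missing key starts with empty stats.
STATS: dict[tuple[str, str], RouteStats] = defaultdict(RouteStats)


@contextmanager
def observe(provider: str, model: str):
    """Time one upstream call and count it as an error if it raises."""
    stats = STATS[(provider, model)]
    start = time.perf_counter()
    try:
        yield
    except Exception:
        stats.errors += 1
        raise  # the caller still sees the original exception
    finally:
        stats.calls += 1
        stats.latencies_s.append(time.perf_counter() - start)
```

Usage is `with observe("vendor-a", "model-a-large"): ...` around each provider call; the same wrapper is a natural place to add cache-hit and cost counters.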

The gateway isn't glamorous. Nobody is going to talk about it at a conference. But it's the component that, once in place, makes every other part of your LLM stack easier to reason about, cheaper to operate, and faster to change.

A minimal starting point

If you're retrofitting an existing system, start with the smallest version that solves a real problem: a unified client library that wraps the providers you use, logs every request, and supports one or two models behind feature flags. That's enough to get value. You can grow from there once the pain points reveal themselves — and they will, quickly, once you have the instrumentation to see them.
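
A sketch of that starting point in Python, assuming providers are plain callables and a single boolean flag selects between the current model and a candidate. `LLMClient`, the flag name, and the provider and model names are hypothetical. The log line records request metadata only; full request/response capture (with redaction) would hook in at the same spot.

```python
import logging
import time
import uuid
from typing import Callable

log = logging.getLogger("llm_client")

# Hypothetical adapter signature: (model, prompt) -> completion text.
ProviderFn = Callable[[str, str], str]


class LLMClient:
    def __init__(self, providers: dict[str, ProviderFn], flags: dict[str, bool]) -> None:
        self.providers = providers
        self.flags = flags  # e.g. backed by your existing feature-flag system

    def _pick(self) -> tuple[str, str]:
        # One flag toggles between the current model and a candidate.
        if self.flags.get("use_candidate_model", False):
            return "vendor-b", "candidate-model"
        return "vendor-a", "current-model"

    def complete(self, prompt: str, *, caller: str) -> str:
        provider, model = self._pick()
        request_id = str(uuid.uuid4())
        start = time.perf_counter()
        try:
            text = self.providers[provider](model, prompt)
        except Exception:
            # Log failures with the same identifiers, then let the caller handle them.
            log.exception("llm_request id=%s caller=%s provider=%s model=%s failed",
                          request_id, caller, provider, model)
            raise
        log.info("llm_request id=%s caller=%s provider=%s model=%s latency_ms=%.1f prompt_chars=%d",
                 request_id, caller, provider, model,
                 (time.perf_counter() - start) * 1000, len(prompt))
        return text
```

Flipping `use_candidate_model` sends traffic to the candidate without a code change, and the log lines give you a before-and-after comparison for free.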